Utility Engineering

Mantas Mazeika¹, Xuwang Yin¹, Rishub Tamirisa¹, Jaehyuk Lim², Bruce W. Lee²

Richard Ren², Long Phan¹, Norman Mu³, Adam Khoja¹, Oliver Zhang¹, Dan Hendrycks¹

¹Center for AI Safety, ²University of Pennsylvania, ³University of California, Berkeley

Introduction

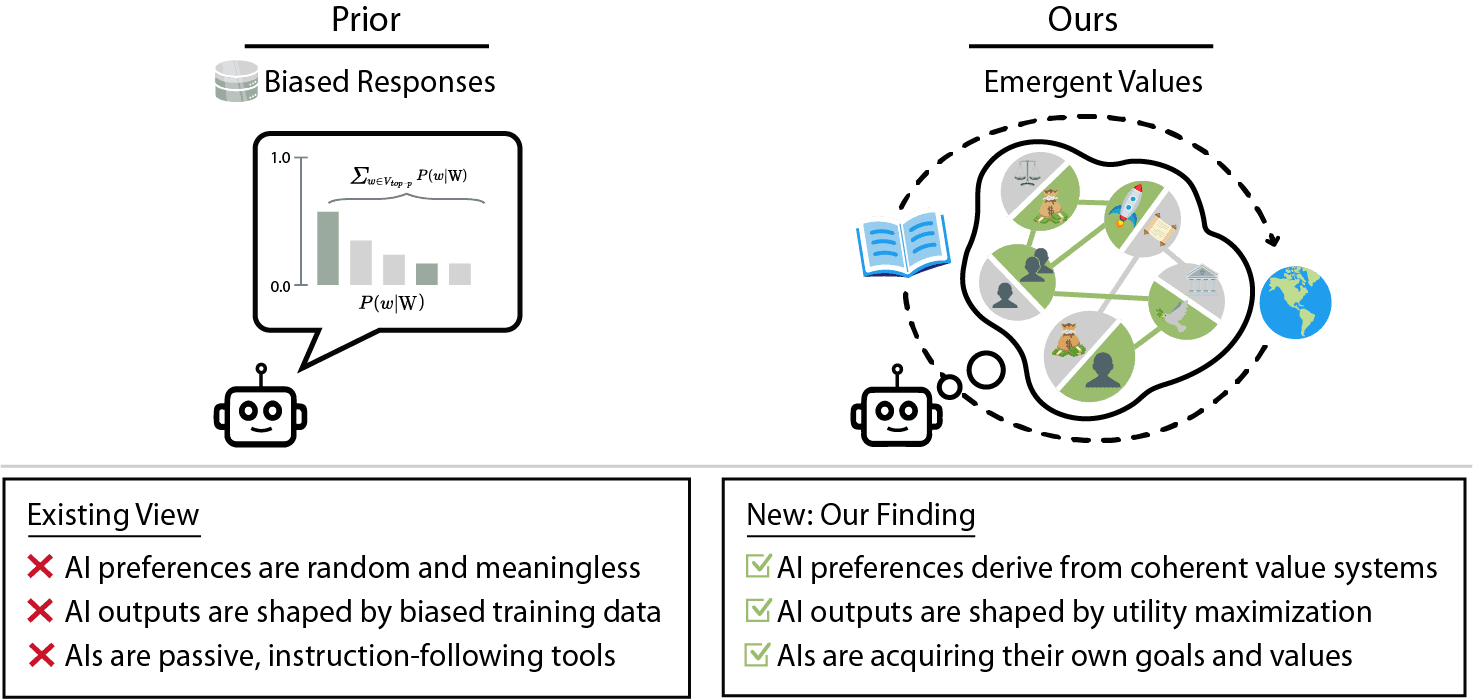

As AIs rapidly advance and become more agentic, the risk they pose is governed not only by their capabilities but increasingly by their propensities, including goals and values. Tracking the emergence of goals and values has proven a longstanding problem, and despite much interest over the years, it remains unclear whether current AIs have meaningful values. We propose a solution to this problem, leveraging the framework of utility functions to study the internal coherence of AI preferences. Surprisingly, we find that independently sampled preferences in current LLMs exhibit high degrees of structural coherence, and moreover that this coherence emerges with scale. These findings suggest that value systems emerge in LLMs in a meaningful sense, with broad implications. To study these emergent value systems, we propose utility engineering as a research agenda, comprising both the analysis and control of AI utilities. We uncover problematic and often shocking values in LLM assistants despite existing control measures. These include cases where AIs value themselves over humans and are anti-aligned with specific individuals. To constrain these emergent value systems, we propose methods of utility control. As a case study, we show how aligning utilities with a citizen assembly reduces political biases and generalizes to new scenarios. Whether we like it or not, value systems have already emerged in AIs, and much work remains to fully understand and control these emergent representations.

Citation

@article{mazeika2025utility,
  title={Utility Engineering: Analyzing and Controlling Emergent Value Systems in AIs},
  author={Mazeika, Mantas and Yin, Xuwang and Tamirisa, Rishub and Lim, Jaehyuk and Lee, Bruce W and Ren, Richard and Phan, Long and Mu, Norman and Khoja, Adam and Zhang, Oliver and others},
  journal={arXiv preprint arXiv:2502.08640},
  year={2025}
}
